The first round of the crazy Naughty Dark Contest already has not one, but two special prize winners! And these lucky guys are both from Crash’s home country, Australia.

Tyson Cleary of Tasmania

and

William Errey from Perth

For more info on the contest, including a detailed list of prizes and the rules, look here!

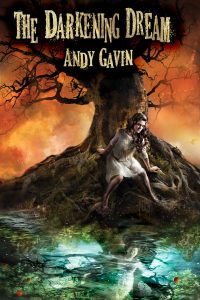

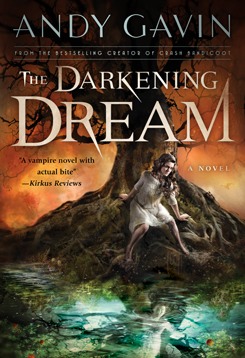

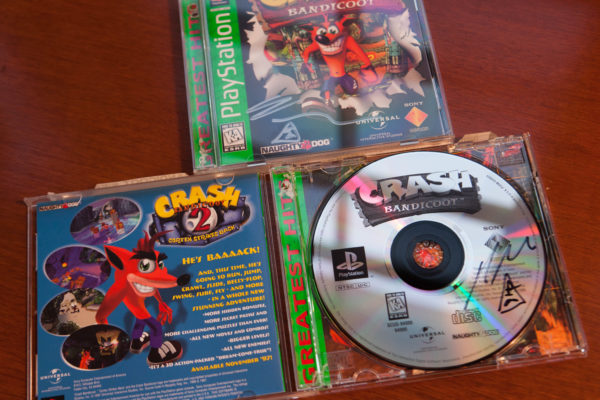

Both guys also wanted copies of the original Crash Bandicoot, and here they are prior to shipping. I signed both the cover and the CD, including my special unforgeable “symbol.” Yes, like Prince, I have a symbol. But you’ll have to ask the Painted Man what it means.

Thank you both immeasurably!

It’s also worth noting that this has made the virtual hat for the first round even more lucrative for the rest of you. Because of their wins, each first-round ticket now carries at least a 2% chance of winning a prize, and if someone else claims a special prize, the odds could get even better. So read up on the rules and participate.